Industrial AI must be built upstream — on trusted, contextualized infrastructure.

By Dan Harple, CEO and Founder, Context Labs

Mar 2, 2026

Without Context, AI Doesn’t Work.

If AI is going to power real-world systems — energy, capital markets, supply chains, regulatory infrastructure — trusted data becomes an operational requirement, not a theoretical one.

The most important data is data about the world: not data about websites or content, but data about the physical systems that sustain life, such as energy, infrastructure, and supply chains. The integrity of that data will determine whether AI strengthens those systems or erodes trust and introduces risk.

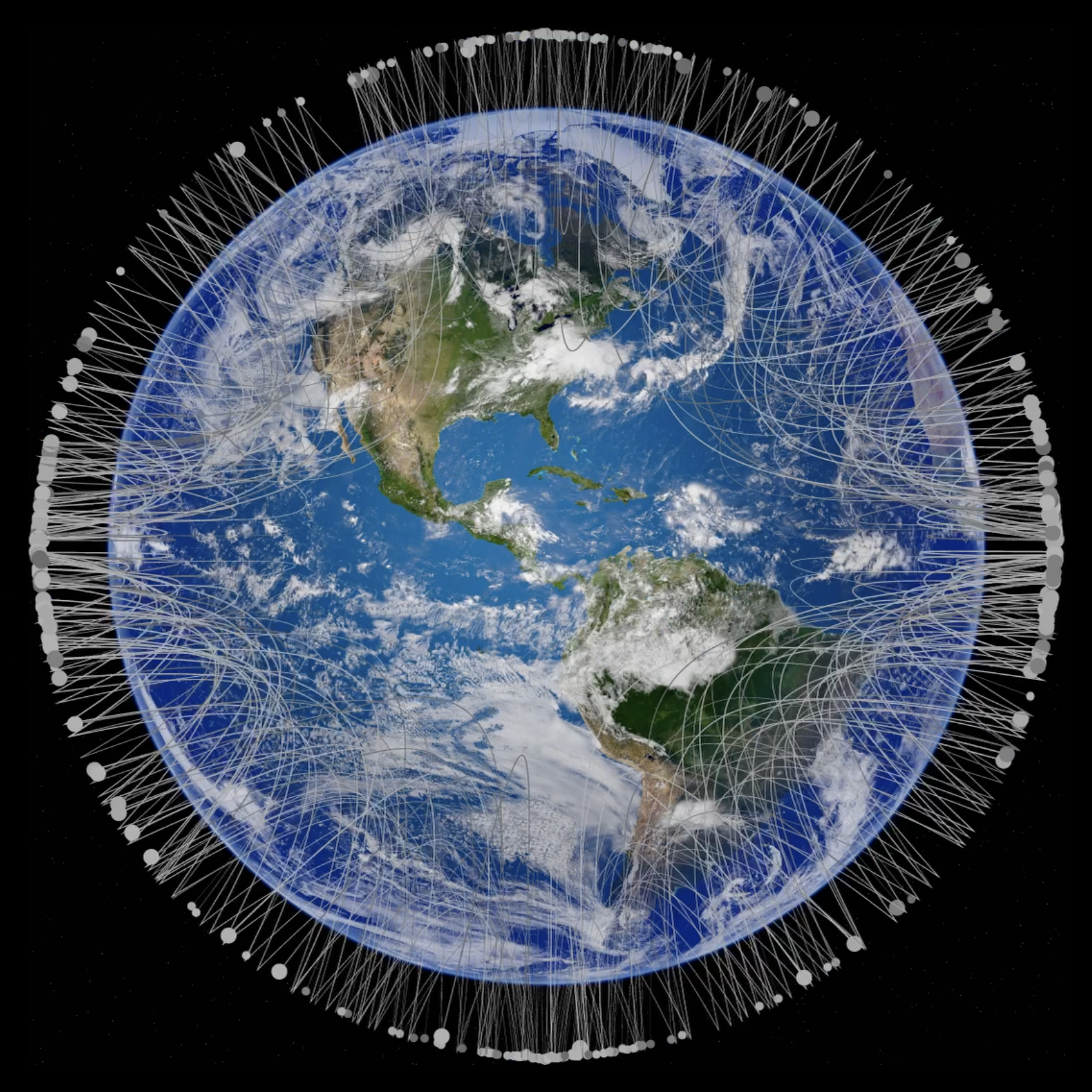

Everything is interconnected. Supply chains are complex network graphs, with nodes and edges spanning geographies, physical assets, commodities, and capital flows. That’s network science. It sits at the heart of what Context Labs does.
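
To make the graph framing concrete, here is a minimal sketch in Python. It is an illustration only, not a description of the Context Labs platform: the node names, the `EDGES` table, and the `downstream` helper are all hypothetical. It models a tiny supply chain as a directed graph and walks it breadth-first to find everything downstream of one asset.

```python
from collections import deque

# Hypothetical nodes and edges, invented for illustration only.
# Each key is a node; each value lists the nodes it feeds directly.
EDGES = {
    "wellpad_A": ["compressor_1"],
    "wellpad_B": ["compressor_1"],
    "compressor_1": ["pipeline_X"],
    "pipeline_X": ["lng_terminal"],
    "lng_terminal": [],
}


def downstream(graph, start):
    """Return every node reachable from `start`, in breadth-first order."""
    reached = []
    visited = {start}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for nxt in graph.get(node, []):
            if nxt not in visited:
                visited.add(nxt)
                reached.append(nxt)
                queue.append(nxt)
    return reached


print(downstream(EDGES, "wellpad_A"))
# ['compressor_1', 'pipeline_X', 'lng_terminal']
```

The same traversal answers a practical question: if data from one asset cannot be trusted, which downstream nodes inherit that uncertainty?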

When I started my first company, the goal was simple: connect people. We built the early protocols that allowed people to see and speak to one another across the internet. We connected one to one. Then one to many. Then data to data. Over time, the internet became pervasive — in your car, on your phone, in your home — exactly as many of us predicted decades ago. Billions of data points flowing constantly.

But something was lost along the way. As the internet scaled, information became abundant and increasingly disconnected from origin and context. When attention became the product, information became optimized for clicks, not for truth or accuracy. Today, AI is being trained on that foundation, and intelligence built on a low-trust foundation will be unstable.

Context Labs was born from a simple premise: restore trust to data.

To trust data, you must know where it came from. You must know how it was measured and how it relates to everything else around it. Provenance. Interconnection. Context.

Without Context, AI doesn’t work.

Scale Requires Going Upstream

The world is fixated on consumer AI — chat interfaces, content generation, convenience, entertainment. That is downstream.

If you want systemic impact, you rebuild the foundations and go upstream into core infrastructure. Upstream is the energy that powers the data centers training the models. Upstream is the supply chain that determines whether the lights turn on and whether food reaches people. Upstream is where capital risk, regulatory pressure, and operational complexity converge.

Without the supply chain, nothing works.

We chose energy first because it is foundational. Energy represents the majority of climate impact globally. It is the most complex, the most regulated, the most interconnected industrial system on the planet.

Today, Context Labs processes billions of data points per day across hundreds of thousands of digital twins of physical entities. We are instrumented across roughly one-third of the U.S. natural gas supply chain, and we’re expanding globally.

Industrial AI should not be built on estimates developed decades ago. It must be built on empiricism: real-time, contextualized, asset-grade data tied directly to equipment, time, and methodology.
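
One way to picture data tied to equipment, time, and methodology is a record that refuses to count as trusted until all three are present. The sketch below is a hypothetical illustration, not the schema Context Labs uses: the `Measurement` class, its field names, and the `missing_context` helper are assumptions made for the example.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import List, Optional


@dataclass(frozen=True)
class Measurement:
    """A single reading plus the context needed to trust it (hypothetical schema)."""
    value: float
    unit: str
    asset_id: Optional[str]          # which piece of equipment produced it
    measured_at: Optional[datetime]  # when it was measured (timezone-aware)
    method: Optional[str]            # how it was measured


def missing_context(m: Measurement) -> List[str]:
    """List the context fields a measurement lacks; an empty list means none."""
    missing = []
    if not m.asset_id:
        missing.append("asset_id")
    # A timestamp without a timezone is ambiguous, so treat it as missing.
    if m.measured_at is None or m.measured_at.tzinfo is None:
        missing.append("measured_at")
    if not m.method:
        missing.append("method")
    return missing


grounded = Measurement(
    value=1.2,
    unit="kg/h",
    asset_id="compressor_1",
    measured_at=datetime(2026, 1, 1, tzinfo=timezone.utc),
    method="continuous_monitor",
)
bare = Measurement(value=1.2, unit="kg/h", asset_id=None, measured_at=None, method=None)

print(missing_context(grounded))  # []
print(missing_context(bare))      # ['asset_id', 'measured_at', 'method']
```

The two records carry the same number, but only the first can be traced back to a device, a moment, and a method.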

Building the Factory

Think of our platform as a factory where raw, unstructured information becomes trusted data. Data flows in from satellites, drones, operational logs, and continuous monitoring systems emitting telemetry every few milliseconds. These streams are fragmented, asynchronous, and often contradictory.

Without reconciliation, infrastructure is at risk — not just reputationally, but economically.

Context Labs ingests all sources and reconciles them through an industry-specific ontology, creating a digital twin of the world’s most complex and highly instrumented physical supply chains. We weave it all together (facilities, compressors, pipelines, sensors, maintenance windows, shipment flows) into a knowledge graph.
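
As a rough illustration of what reconciliation means, the Python sketch below maps differently named readings onto one canonical asset and flags sources that disagree. It is a simplified stand-in, not the Context Labs ontology or pipeline: the `ALIASES` table, the `reconcile` function, the 10% tolerance, and the sample readings are all assumptions, and time alignment is ignored entirely.

```python
from collections import defaultdict

# Hypothetical alias table standing in for an ontology: it maps the names
# different sources use onto one canonical asset identifier.
ALIASES = {
    "CMP-1": "compressor_1",
    "comp_one": "compressor_1",
    "PL-X": "pipeline_X",
}


def reconcile(readings, tolerance=0.1):
    """Group readings by canonical asset and flag sources that disagree.

    readings: iterable of (source, raw_asset_name, value) tuples.
    tolerance: allowed spread as a fraction of the largest absolute value.
    Returns {asset: {"values": {source: value}, "consistent": bool}}.
    """
    grouped = defaultdict(dict)
    for source, raw_name, value in readings:
        asset = ALIASES.get(raw_name, raw_name)  # unknown names pass through
        grouped[asset][source] = value

    result = {}
    for asset, by_source in grouped.items():
        values = list(by_source.values())
        spread = max(values) - min(values)
        limit = tolerance * max(abs(v) for v in values)
        result[asset] = {"values": by_source, "consistent": spread <= limit}
    return result


# Made-up sample readings, for illustration only.
readings = [
    ("satellite", "CMP-1", 10.0),
    ("drone", "comp_one", 10.5),
    ("monitor", "PL-X", 3.0),
    ("ops_log", "PL-X", 5.0),
]
report = reconcile(readings)
print(report["compressor_1"]["consistent"])  # True
print(report["pipeline_X"]["consistent"])    # False
```

A real system would also have to align timestamps and weigh sources by measurement method; the point here is only that contradictions become visible once every source refers to the same entity.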

Each customer’s knowledge graph remains segregated, traceable, and auditable. We build narrow, deep, semantic LLMs: domain-specific models trained on each customer’s own private, contextualized enterprise data. We process millions of records using agentic AI technology to contextualize data at a scale no human team could achieve.

It is a living digital twin: a real-time representation of infrastructure that can answer operational, regulatory, and commercial questions with traceable lineage. We deliver digital infrastructure that scales as regulatory and reporting requirements continue to change.

The result is infrastructure that can influence capital allocation, reduce regulatory friction, expand market access, and reshape how energy flows globally. In a world of tightening global standards — from Europe to the Middle East — empirical verification becomes a competitive advantage.

An Inflection Point for Industrial AI

We are at an inflection point. Trillions are being committed to AI infrastructure — data centers, compute, model training. But the conversation remains downstream: applications, interfaces, monetization.

If AI scales on unverified, decontextualized data feeding energy systems, commodities markets, and regulatory frameworks, the instability will not appear in a pop-up notification on your device. It will appear when shipments are rejected, when assets are mispriced, when capital is misallocated, when supply chains fail.

The risk is not that AI becomes too powerful. The risk is that it becomes deeply embedded in infrastructure without being deeply grounded in truth.

Years from now, the measure of this work will not be headlines. It will be whether industrial AI became more reliable, whether supply chains became more transparent, whether infrastructure became more resilient, and whether markets rewarded those who built on truth.

The work of my first company lives on whenever someone streams a video or makes a video call. In this company, the work lives on when infrastructure runs, and runs on data that can be trusted.

Let’s get the foundation right — a digital foundation for AI that is grounded and trusted.
